Dorothy, 71, from Tucson, Arizona, was folding laundry when her phone rang. It was her son, Michael. She recognized his voice instantly. The slight rasp. The way he says “Mom” with that familiar warmth. He was in trouble. A car accident. Legal fees. He needed $4,500 wired immediately, and please, please don’t tell Dad yet.

She was halfway to the bank when Michael called from his actual number to ask about Sunday dinner.

There was no accident. There was no Michael on that first call. There was only a machine that had learned to sound exactly like her son, trained on three video clips she herself had shared on Facebook.

Dorothy’s story is spreading in fraud prevention offices, bank compliance meetings, and federal task force briefings across the country. It is not an edge case. It is the new blueprint.

The Technology Behind the Terror

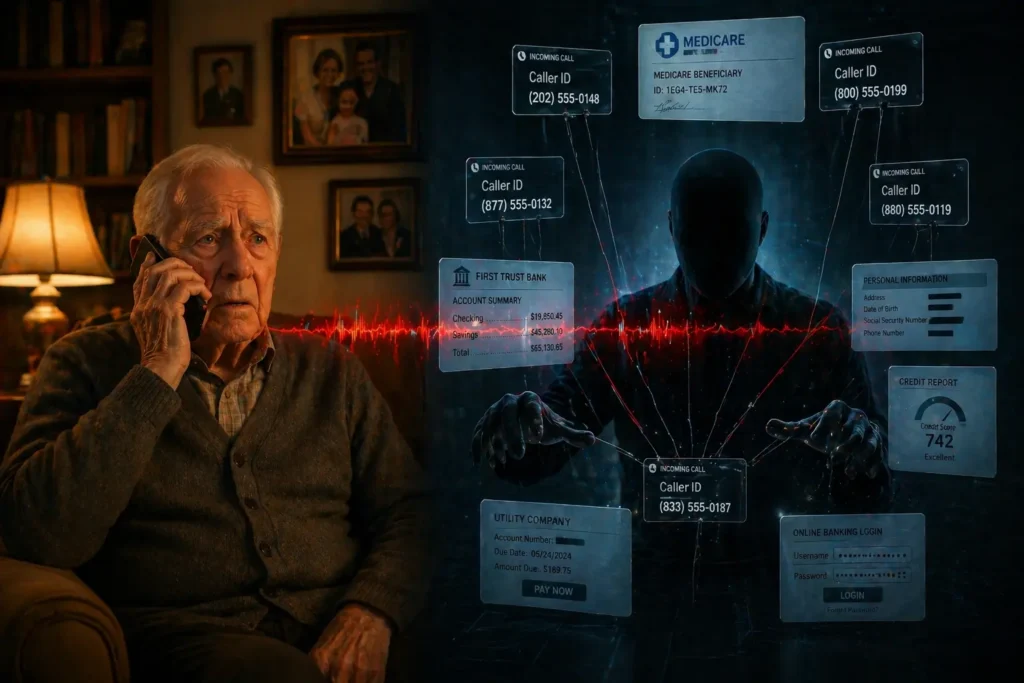

What makes this wave of elder fraud categorically different from anything that came before is a single, chilling capability: AI voice cloning.

Modern voice synthesis software, much of it commercially available and some of it freely downloadable, can replicate a human voice from as little as eight seconds of audio. That audio doesn’t require hacking. It doesn’t require physical proximity. It lives, openly, on your family’s social media profiles. A birthday video. A graduation reel. A grandson’s TikTok.

Within minutes, a stranger can sound like someone you have loved for decades.

Criminal networks operating across Eastern Europe, Southeast Asia, and increasingly within U.S. borders have industrialized this process. They run what fraud investigators now call “voice farms”: operations where dozens of cloned voices are deployed simultaneously against targeted lists of seniors, refined by age, geography, and known family connections scraped from public digital profiles.

The emotional architecture of the scam is deliberate: create urgency, invoke love, manufacture shame, and demand secrecy. Every element is engineered to bypass rational thinking and activate pure parental or grandparental instinct.

Why Banks Are Whispering, Not Shouting

The phrase “quietly warning” is not rhetorical. Financial institutions face a structural tension: publicize the full scale of AI-driven elder fraud, and you risk triggering mass anxiety about the safety of digital banking itself. Stay silent, and customers continue to lose everything.

The result is a calibrated middle path: internal training updates, teller protocols, small-print newsletter inserts, and security portal notices that most customers never read.

What those internal warnings reveal is alarming. Front-line bank staff are now being trained to spot behavioral red flags in elderly customers: unexplained large cash withdrawals, flushed or tearful expressions, rehearsed-sounding explanations, and an unusual urgency to complete transactions quickly and quietly.

The FBI’s Internet Crime Complaint Center documented over $3.4 billion in losses among Americans over 60 in 2023. Investigators widely regard this number as a significant undercount. Shame, confusion, and fear of appearing vulnerable keep many victims silent.

The Three Scams Dominating 2026

Fraud analysts tracking AI-assisted elder scams have identified three primary variants currently in active circulation:

The Grandparent Emergency Call remains the most emotionally devastating. A cloned family voice. A fabricated crisis. Payment demanded in gift cards, cryptocurrency, or same-day wire transfer, all chosen because they are hard to trace or reverse.

The Medicare AI Impersonation deploys a calm, authoritative synthetic voice posing as a federal benefits agent. Seniors are told their Medicare or Social Security account requires “urgent verification.” In the span of one phone call, routing numbers, Social Security digits, and account credentials are harvested cleanly.

The Fraud Department Reversal is the scam’s cruelest irony. Criminals now impersonate bank fraud prevention teams, complete with spoofed caller ID displaying the bank’s real number, warning seniors that their accounts have been compromised and instructing them to transfer funds to a “secured holding account.” The secured account belongs to the scammer.

The con has learned to wear the uniform of protection.

What Banks Are Actually Doing About It

Behind the polished marble and the deposit slip dispensers, bank branch managers across America are implementing something low-tech and deeply human: the slow-down conversation.

Tellers are coached to gently stall unusual transactions with open-ended questions. “Big plans today?” “Is everything okay?” A few seconds of sincere human attention is often enough to interrupt the trance a scammer has carefully constructed over thirty minutes on the phone.

More formally, some institutions are now offering “safe word” account protections: a privately registered code word known only to the account holder and a designated family contact. Large cash withdrawals above a set threshold cannot be processed without it.

The architecture is almost quaint. No blockchain. No biometric scan. Just a word chosen by a grandmother, remembered by her daughter, standing between a family’s life savings and a voice that was never real.

The most effective shield against artificial intelligence, it turns out, is a genuinely human one.
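For readers curious how simple the safe-word rule really is, it can be sketched in a few lines of Python. This is an illustration only, not any bank's actual system: the $2,000 threshold, the function names, and the choice to store a salted hash instead of the word itself are all assumptions made for the example.

```python
import hashlib
import hmac
import os
from typing import Optional

# Hypothetical threshold; a real bank would set its own limit.
THRESHOLD_USD = 2_000


def hash_safe_word(word: str, salt: bytes) -> bytes:
    """Hash the safe word so the plain word is never stored (an assumption)."""
    normalized = word.strip().lower().encode("utf-8")
    return hashlib.pbkdf2_hmac("sha256", normalized, salt, 100_000)


def may_process_withdrawal(
    amount_usd: float,
    spoken_word: Optional[str],
    stored_hash: bytes,
    salt: bytes,
    threshold_usd: float = THRESHOLD_USD,
) -> bool:
    """Allow small withdrawals; require the safe word above the threshold."""
    if amount_usd <= threshold_usd:
        return True
    if spoken_word is None:
        return False
    # Constant-time comparison of the two hashes.
    return hmac.compare_digest(hash_safe_word(spoken_word, salt), stored_hash)


# Example: the account holder registers a word, then a teller checks it.
salt = os.urandom(16)
stored = hash_safe_word("Captain Bubbles", salt)
print(may_process_withdrawal(4_500, None, stored, salt))
print(may_process_withdrawal(4_500, "captain bubbles", stored, salt))
```

In this sketch, a $4,500 request with no word is refused, while the same request with the registered word goes through. A cloned voice can imitate a son, but it has no way to supply a word that was never spoken online.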

What You and Your Family Can Do Right Now

The single most powerful protective measure any family can take costs nothing and takes four minutes. Create a verbal family verification code: something personal, absurd, and impossible for an AI model to guess under pressure. A childhood nickname. The name of a first goldfish. A joke only your family tells.

Then have the conversation directly: “If anyone ever calls you claiming to be me in an emergency, ask for the code word first. No exceptions. No explanations.”

Beyond the code:

- Always hang up and call back on a number you already have saved. Never use a number provided by the incoming caller.

- Treat any request for gift cards, wire transfers, or cryptocurrency as an automatic red flag, regardless of how real the voice sounds.

- Never trust caller ID alone. Number spoofing technology renders it meaningless.

- Contact your bank directly and ask about elder fraud monitoring services and whether safe word features are available on your account.

The Gap That Is Getting People Killed

Congress has introduced several bills targeting AI-generated fraud and synthetic media misuse, but legislative timelines measured in years cannot keep pace with technology evolving in weeks. In that widening gap, American seniors represent the most targeted demographic in the history of financial crime: digitally reachable, emotionally generous, and systematically underprotected.

The voice on the phone will sound like your son. It will know his name, his cadence, the particular way he says he loves you. That is not an accident. That is the product.

Love has always been the deepest vulnerability. Now, for the first time in human history, it can be manufactured on demand.

© AiwalaNews | Global Tech & Privacy Edition | April 2026