You typed something into Google. Results appeared in under half a second.

That felt simple. It was not.

In that fraction of a second, Google ran your query through a system so complex that even the engineers who built parts of it cannot fully explain how the whole thing works together. It is not one algorithm. It is hundreds of systems, signals, and machine learning models running simultaneously — each one influencing what you see, in what order, and why.

And almost none of it is public.

It Started With One Idea – Links as Votes

When Larry Page and Sergey Brin built the first version of Google in 1998, the core idea was simple. If a webpage had a lot of other websites linking to it, it was probably important. More links meant more trust. They called this PageRank.

Think of it like academic citations. If a research paper is referenced by hundreds of other papers, it is probably credible. PageRank applied that same logic to the web.

For years, this worked well. The web was smaller, the manipulation was limited, and results were genuinely useful.

Then people figured out how to game it.

Entire industries grew up around buying and selling links, stuffing pages with keywords, and tricking Google into ranking low-quality content at the top. Google had to respond. And the way it responded changed everything.

The System That Exists Today

Modern Google Search does not run on one algorithm. It runs on a layered system of signals, filters, and AI models that work together to produce a ranked list in milliseconds.

Here is what Google has publicly confirmed exists inside that system — and what each part actually does.

Crawling and indexing is the foundation. Google’s bots — called Googlebot — constantly browse the web, following links from page to page, reading content, and storing what they find in Google’s index. The index is essentially a copy of a large portion of the internet, organized so Google can search it instantly. If a page is not in the index, it does not exist in Google’s world, no matter how good it is.

RankBrain was introduced in 2015 and was Google’s first major use of machine learning inside search. Before RankBrain, Google matched keywords. RankBrain tried to understand intent — what the person actually meant, not just what they typed. If you search “best way to deal with a difficult boss,” Google now understands you want workplace advice, not a guide on managing people. RankBrain made Google dramatically better at interpreting ambiguous or never-before-seen queries.

BERT came in 2019 and went deeper. It stands for Bidirectional Encoder Representations from Transformers — a language model that understands how words relate to each other in context. The word “to” means something different in “I need a flight to Paris” versus “I want to fly.” BERT helped Google understand those differences at scale.

MUM — Multitask Unified Model — arrived in 2021 and is reportedly 1,000 times more powerful than BERT. It can process text, images, and video simultaneously, understand 75 languages, and draw connections across topics in ways previous systems could not. Ask Google a complex multi-part question today and MUM is likely involved in understanding what you need.

The 200+ Signals Nobody Can Fully List

Beyond the named systems, Google uses over 200 individual ranking signals to score every page for every query. Google has confirmed some of these exist. Others have been inferred by researchers over years of testing. Many remain completely unknown.

The confirmed ones include things like page speed — how fast your page loads, especially on mobile. Mobile friendliness — whether the page works properly on a phone. HTTPS security — whether the site uses a secure connection. Core Web Vitals — a set of measurements around how stable and responsive a page feels to a real user.

Then there is content quality, which is where it gets harder to define. Google’s internal quality rating guidelines — a document it does publish — describe something called E-E-A-T: Experience, Expertise, Authoritativeness, and Trustworthiness. Pages that demonstrate genuine knowledge from real experience, written by people who know what they are talking about, hosted on sites with a track record of accuracy, tend to rank higher.

But here is the honest truth: nobody outside Google knows exactly how E-E-A-T is measured by the algorithm. The guidelines exist for human quality raters who evaluate results after the fact. How those human evaluations feed back into the automated system is not public.

User behavior signals are perhaps the most debated. Google has denied for years that click-through rates and time spent on a page directly influence rankings. Independent research has suggested otherwise. What is clear is that Google has access to enormous amounts of behavioral data — from Chrome, from Android, from Google Analytics on millions of websites — and it would be surprising if none of that informed how results are ranked.

The Part AI Has Changed

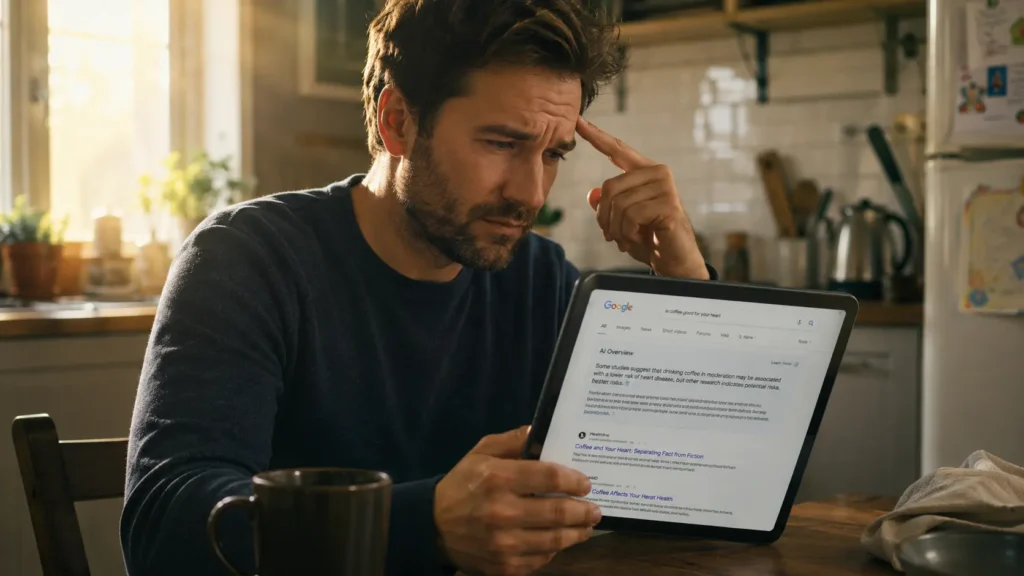

In March 2024, Google began rolling out AI Overviews — the summarized AI-generated answers that now appear at the top of many search results pages.

This changed the game significantly. For the first time, Google was not just pointing you toward a webpage. It was generating an answer itself, pulling from multiple sources, and presenting it as a direct response.

The result is that for millions of queries, users now get an answer without clicking anything. The websites that used to get that traffic get less of it. Publishers, news organizations, and content creators noticed immediately.

AI Overviews also introduced a new problem. On several occasions after launch, the AI generated answers that were factually wrong, occasionally absurd, and in a few viral cases, genuinely dangerous. Google moved quickly to fix the worst examples, but the incidents raised a fundamental question — if nobody fully understands how the ranking algorithm works, and the AI summarizing results is also not fully understood, what exactly is the system guaranteeing about the accuracy of what you read?

The honest answer is: not as much as most people assume.

Why Google Will Never Fully Explain It

Google has a very good reason to keep the algorithm opaque. The moment it becomes fully transparent, it becomes fully gameable.

Every confirmed signal becomes a target. Every published guideline becomes a checklist for manipulation. The entire history of SEO — search engine optimization — is the story of people reverse-engineering Google’s rules and exploiting them until Google changed the rules again.

The opacity is not just secrecy for the sake of corporate control. It is partially a defense mechanism against a web full of people trying to manipulate what you see.

But that opacity also means you are trusting a system you cannot inspect, run by a company with its own commercial interests, to decide what information you have access to every time you search for something.

That is an enormous amount of power concentrated in one place.

And the most unsettling part is this — the engineers at Google are not hiding the algorithm from you because they want to. Some of them genuinely cannot explain why their own system ranks one page above another. The machine learning models at the core of modern Google Search make decisions the way all deep learning systems do — in ways that produce results but resist simple explanation.

Nobody fully understands it. Not even them.

Read also: 🔗 The AI That Reads Your Emotions — and the Companies Already Buying the Data — AIwala News 🔗 Why “Free” Apps Are the Most Expensive Thing on Your Phone — AIwala News